
Creation


Breaking with the tradition of point-and-click web browsing, you can navigate through this unique experience simply by gesturing in front of your device’s camera. This was made possible using the getUserMedia API. Discover Movi.Kanti.Revo here: http://www.movikantirevo.com/
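Camera access of this kind goes through getUserMedia. The sketch below is not the site's code. It uses today's promise-based `navigator.mediaDevices.getUserMedia` rather than the vendor-prefixed, callback-based form (such as `navigator.webkitGetUserMedia`) that browsers shipped at the time. `UserMediaSource` and `requestCamera` are hypothetical names, introduced so the call can be exercised without a browser.

```typescript
// Hypothetical structural type covering the one method of
// navigator.mediaDevices used here, so a stub can stand in outside a browser.
interface UserMediaSource {
  getUserMedia(constraints: { video: boolean; audio: boolean }): Promise<unknown>;
}

// Hypothetical helper: request the webcam, and optionally the microphone.
// The browser shows a permission prompt; the promise rejects if the user
// declines or no matching device exists.
function requestCamera(
  devices: UserMediaSource,
  withMicrophone = false,
): Promise<unknown> {
  return devices.getUserMedia({ video: true, audio: withMicrophone });
}

// Browser usage (not run here): feed the stream to a <video> element that a
// face-tracking library can read frames from.
// requestCamera(navigator.mediaDevices).then((stream) => {
//   video.srcObject = stream as MediaStream;
// });
```

Passing the media source in keeps the helper testable. In a page, the argument would simply be `navigator.mediaDevices`.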

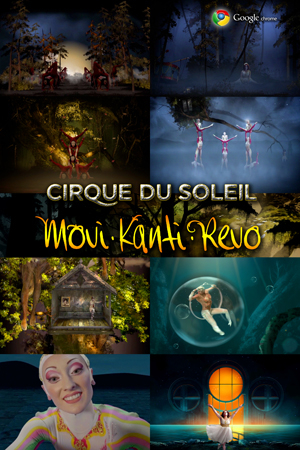

Google Chrome and Cirque du Soleil have partnered to show off the potential of the modern Web with an all-HTML5 Cirque performance that's unique to the Web, called Movi.Kanti.Revo. You can interact with the site by moving your body or speaking to your computer. If that sounds a lot like Microsoft's Kinect to you, you're not alone. But unlike Microsoft's proprietary motion-sensing technology, Movi.Kanti.Revo is built entirely in HTML5, CSS3, and JavaScript, the same tools that power many modern Web sites and a growing number of mobile apps.

Cirque du Soleil’s Gillian Ferrabee, creative director for images and special projects, couldn’t recall precisely how Google and Cirque decided to partner, but said that she was instantly impressed with the first meeting between the two companies. “Interactivity and the [Webcam] reading your body were discussed in the first meeting with Google and Particle,” she said, referring to the third-party company that built Movi.Kanti.Revo. “We thought, ‘How can we be playful with that?’ We wanted to make it fun to participate, rather than a challenge.”

Pete LePage, a developer advocate for Google's Chrome team, explained that the project came from Google's ongoing interest in creating Chrome Experiments to showcase what Chrome and the modern Web are capable of. The best-known of these to date is probably Google's collaboration with the band The Arcade Fire on an interactive music video called "The Wilderness Downtown." Unlike that experiment, though, which caught some flak for possibly containing code that kept it from working properly in browsers that would otherwise have supported it, this one was meant to work everywhere: LePage said that Google wanted to make sure it works across all browsers. "We tried on this one to make sure it works across browsers, so for CSS transforms we coded for all the available browsers," he said. The Movi.Kanti.Revo code does include browser-specific (vendor-prefixed) versions of its CSS transforms, but LePage said that was only so that browsers that hadn't yet built full support for the technology could use it as that support came online. Movi.Kanti.Revo will also work on most tablets and some smartphones, LePage said, because it supports deviceOrientation and deviceMotion: on those devices, the site responds to your device's accelerometer as you move the device, instead of to your body.
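On tablets and phones, orientation readings can stand in for head position. A minimal sketch, assuming the scene is rotated by the change in tilt since the experience started; `Orientation`, `StageRotation`, `tiltToRotation` and the 15° limit are hypothetical, not taken from Movi.Kanti.Revo. It reads the `beta` (front-to-back) and `gamma` (left-to-right) angles that `deviceorientation` events report; a deviceMotion-based version would read acceleration instead.

```typescript
// Subset of DeviceOrientationEvent used here. Angles are in degrees and are
// null when the device cannot report them.
interface Orientation {
  beta: number | null;  // front-to-back tilt, -180..180
  gamma: number | null; // left-to-right tilt, -90..90
}

interface StageRotation {
  rotateX: number; // degrees, for the scene's rotateX()
  rotateY: number; // degrees, for the scene's rotateY()
}

// Change in one angle since the neutral pose; a missing reading counts as
// no change. Wrap-around at ±180° is ignored to keep the sketch short.
function delta(current: number | null, neutral: number | null): number {
  return current === null || neutral === null ? 0 : current - neutral;
}

function clamp(value: number, limit: number): number {
  return Math.max(-limit, Math.min(limit, value));
}

// Hypothetical mapping: forward/back tilt turns the scene around its X-axis,
// left/right tilt turns it around its Y-axis, both capped at `limit` degrees.
function tiltToRotation(
  current: Orientation,
  neutral: Orientation,
  limit = 15,
): StageRotation {
  return {
    rotateX: clamp(delta(current.beta, neutral.beta), limit),
    rotateY: clamp(delta(current.gamma, neutral.gamma), limit),
  };
}

// Browser wiring (not run here; `scene` is a hypothetical element):
// let neutral: Orientation | null = null;
// window.addEventListener("deviceorientation", (e) => {
//   neutral ??= { beta: e.beta, gamma: e.gamma };
//   const { rotateX, rotateY } = tiltToRotation(e, neutral);
//   scene.style.transform = `rotateX(${rotateX}deg) rotateY(${rotateY}deg)`;
// });
```

Measuring tilt relative to the starting pose means the scene looks straight-on however the user happens to be holding the device when it loads.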

Cirque's interest in making a Web-based version of its shows dovetailed with Google's interest in showing off modern Web standards, with Chrome as the platform. "Cirque wanted to start building a show that lived beyond their normal performances, and we wanted to use stuff that's just coming online, such as HTML5 and CSS3," LePage said. Specifically, he said, "we talked about the getUserMedia API to get access to the users' Webcam and microphone." The new HTML5 getUserMedia API, LePage explained, becomes far more useful with WebRTC, a new open-source JavaScript API that allows real-time communications (RTC) through the Web browser when you give it permission to do so. It lets the browser access your computer's Webcam and microphone, and it contains a "communication protocol" that allows media to be sent from and received by your computer. WebRTC has a lot of modern media tools built in, like support for high-quality audio and video, packet-loss compensation, and jitter correction. LePage said that it's already in the Firefox nightly builds and that Opera plans to support it, too. However, like much of the modern Web, the standard is still developing. "Just landed in Chrome Canary yesterday was response to voice control," he noted.

Beyond the technical challenges of building a robust, interactive site with technology that is still under development, Ferrabee enthusiastically added that Movi.Kanti.Revo was a good learning challenge for Cirque du Soleil, too. "The experience, it's almost like a trompe [l'oeil, a trick of the eye]. So, what Particle and Google created, as well as filming some of the acts with the camera moving, is that it replaces the old 3D. It works, and it feels alive."

There's more to the project than overcoming technical and artistic challenges, though. There's the core question of why anybody would care, beyond Web developers and performance art junkies.

Ferrabee explained that she cares on a personal level because she finds it "beautiful and exciting," but she thinks people will respond to Movi.Kanti.Revo because of how it brings technology and art together. "The Web is a big part of our lives, and most people are interested in beauty. I think the project opens doors for people creatively and in their imagination, and demonstrates to them what's possible."

Crafting the 3D World

The experiment was created using just HTML5, and the environment is built entirely with markup and CSS. Like set pieces on stage, divs, imgs, small videos and other elements are positioned in a 3D space using CSS. The new getUserMedia API enabled a whole new way of interacting with the experiment: instead of using the keyboard or mouse, a JavaScript facial detection library tracks your head and moves the environment along with you.

All The Web's a Stage

To build this experiment, it’s best to imagine the browser as a stage, and the elements within it as set pieces.

Building the Auditorium

Let’s take a look at the last scene to see how it’s put together. All scenes are broken down into three primary containers: the world container, a perspective container, and the stage. The world container effectively sets up the viewer’s camera and uses the CSS perspective property to set how far the viewer sits from the stage.

```html
<div id="world-container">
  <div id="perspective-container">
    <div id="stage">
    </div>
  </div>
</div>
```

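```css
#world-container {
  perspective: 700px;
  overflow: hidden;
}

/* The original listing marks the properties below with "+", apparently
   shorthand for "plus vendor-prefixed variants"; the standard unprefixed
   properties are shown here. */
#perspective-container {
  transform-style: preserve-3d;
  transform-origin: center center;
  /* perspective-origin only affects an element that also sets perspective,
     and its default is already the centre, so it has no visible effect here. */
  perspective-origin: center center;
  transform: translate3d(0, 0, 0) rotateX(0deg)
             rotateY(0deg) rotateZ(0deg);
}
```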
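The zeroed translate3d() and rotate values on #perspective-container look like a neutral pose that script updates at runtime, which would fit the head tracking described earlier. The sketch below is an assumption about how that could work, not the site's code: `FacePosition` and `headToTransform` are hypothetical names, the face position would come from whatever face-detection library is used, and the 10° maximum is arbitrary.

```typescript
// Hypothetical face-detector output: centre of the detected face, in video pixels.
interface FacePosition {
  x: number;
  y: number;
}

// Map the face's offset from the centre of the camera frame to a transform
// for #perspective-container. Horizontal movement turns the scene around its
// Y-axis and vertical movement tilts it around its X-axis, up to maxDegrees
// when the face reaches the edge of the frame.
function headToTransform(
  face: FacePosition,
  frameWidth: number,
  frameHeight: number,
  maxDegrees = 10,
): string {
  const clamp = (v: number) => Math.max(-1, Math.min(1, v));
  // Offsets normalised to -1..1, with 0 at the frame centre.
  const dx = clamp((face.x - frameWidth / 2) / (frameWidth / 2));
  const dy = clamp((face.y - frameHeight / 2) / (frameHeight / 2));
  // Raw webcam frames are usually not mirrored, so a user stepping to their
  // right moves left in the frame; flip the sign of dx if the scene should
  // follow the user's own left and right.
  const rotateY = dx * maxDegrees;
  const rotateX = -dy * maxDegrees;
  return (
    `translate3d(0, 0, 0) rotateX(${rotateX.toFixed(2)}deg) ` +
    `rotateY(${rotateY.toFixed(2)}deg) rotateZ(0deg)`
  );
}
```

In a page, the returned string would be assigned to the container's `style.transform` each time the detector reports a face; averaging the last few positions is one common way to damp detector jitter.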

Visualizing the Stage

Within the stage, there are seven elements in the final scene. Moving from back to front, they are the sky background, a fog layer, the doors, the water, reflections, an additional fog layer, and finally the cliffs in front. Each item is placed on stage with a CSS 3D transform.

Figure 1 shows the scene zoomed out and rotated almost 90 degrees so that you can see how each set piece is placed within the stage. From the back (furthest to the left), you can see the background, fog, doors, water and finally the cliffs.

Placing the Background on Stage

Let’s start with the background image. Since it’s the furthest back, we applied a -990px translation along the Z-axis to push it back in our perspective (see Figure 2).

As it moves back in space, perspective makes it appear smaller, so it needs to be enlarged with a scale() transform.

```css
.background {
  width: 1280px;
  height: 800px;
  top: 0px;
  background-image: url(stars.png);
  transform: translate3d(0, 786px, -990px)
             scale(3.3);
}
```
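The size change follows from how CSS perspective works: with a perspective of d pixels, a plane at depth z (negative values are farther away) is drawn at d / (d - z) of its size. A small sketch of that arithmetic, assuming the background's -990px depth sits in the space that the world container's 700px perspective applies to; the function names are hypothetical. Transform functions apply right to left, so scale(3.3) enlarges the layer before translate3d() pushes it back.

```typescript
// Fraction of its natural size at which CSS draws a plane at depth z
// (negative z is farther from the viewer) under a perspective of d pixels.
function perspectiveFactor(d: number, z: number): number {
  if (z >= d) throw new RangeError("plane is at or behind the viewer");
  return d / (d - z);
}

// scale() value that exactly cancels the perspective shrink at depth z.
function compensatingScale(d: number, z: number): number {
  return (d - z) / d;
}

// On-screen size, relative to the natural size, after applying `scale`
// and then pushing the plane to depth z.
function apparentScale(d: number, z: number, scale: number): number {
  return scale * perspectiveFactor(d, z);
}

const d = 700;  // #world-container perspective
const z = -990; // .background translateZ
console.log(compensatingScale(d, z).toFixed(2)); // "2.41"
console.log(apparentScale(d, z, 3.3).toFixed(2)); // "1.37"
```

By this arithmetic, scale(3.3) more than cancels the shrink (about 2.41 would restore the natural size), so the 1280 × 800 sky is drawn at roughly 1.37 times its natural size. Extra margin for when the scene moves with the viewer's head is one plausible reason, but the article does not give one.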

The fog, doors, and cliffs are placed in the same way, each by applying its own translate3d() and scale() transform.

Adding the Sea

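To create as realistic an environment as possible, we knew we couldn’t simply put the water on a vertical plane; water typically doesn’t exist that way in the real world. In addition to applying the translate3d() and scale() transforms, we tilt it 60 degrees around the X-axis with rotateX(60deg), so it lies closer to horizontal.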

```css
.sea {
  bottom: 120px;
  background-image: url(sea2.png);
  width: 1280px;
  height: 283px;
  transform: translate3d(-100px, 225px, -30px)
             scale(2.3) rotateX(60deg);
}
```
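The tilt also changes how tall the sea looks. Treating the projection as orthographic (an approximation that ignores the 700px perspective, which additionally makes the edge nearer the viewer look wider than the far edge), rotateX(θ) shortens the layer's height h by cos θ, and scale(s) enlarges it again:

$$
h_{\text{screen}} \approx s\,h\cos\theta = 2.3 \times 283\,\text{px} \times \cos 60^\circ \approx 325\,\text{px}
$$

So, before perspective is taken into account, the 283px-tall sea image occupies a band of roughly 325px once tilted and scaled.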

Each scene was built in a similar fashion: elements were visualized within the 3D space of a stage, and an appropriate transform was applied to each.
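Because every set piece follows the same recipe, the per-element rules could be generated from a small scene description. The sketch below is a hypothetical illustration, not how the site was built: `SetPiece`, `PREFIXES`, `transformFor` and `cssFor` are invented names, the values are the .background and .sea numbers quoted above, and the prefix list is an assumption about the browser-specific flags LePage mentions. Today the unprefixed `transform` property is enough.

```typescript
// Hypothetical description of one set piece: where it sits in 3D space and
// how it is scaled and tilted.
interface SetPiece {
  selector: string;
  translate: [number, number, number]; // x, y, z in px
  scale: number;
  rotateX?: number; // degrees, e.g. for the sea
}

// Assumed vendor prefixes of that era; "" yields the standard property.
const PREFIXES = ["-webkit-", "-moz-", "-ms-", "-o-", ""];

// Build the transform value. Functions apply right to left, so any tilt is
// applied first, then the scale, then the translation.
function transformFor(piece: SetPiece): string {
  const [x, y, z] = piece.translate;
  let value = `translate3d(${x}px, ${y}px, ${z}px) scale(${piece.scale})`;
  if (piece.rotateX !== undefined) value += ` rotateX(${piece.rotateX}deg)`;
  return value;
}

// Emit one CSS rule with a declaration per entry in PREFIXES.
function cssFor(piece: SetPiece): string {
  const value = transformFor(piece);
  const declarations = PREFIXES.map((prefix) => `  ${prefix}transform: ${value};`);
  return `${piece.selector} {\n${declarations.join("\n")}\n}`;
}

const scene: SetPiece[] = [
  { selector: ".background", translate: [0, 786, -990], scale: 3.3 },
  { selector: ".sea", translate: [-100, 225, -30], scale: 2.3, rotateX: 60 },
];

console.log(scene.map(cssFor).join("\n\n"));
```

Running it prints the .background and .sea rules with one transform declaration per prefix; adding a set piece means adding one entry to `scene`.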

From the Cirque offices in Montreal, Canada, Ferrabee described Movi.Kanti.Revo as a typical Cirque production, in some ways. You’re invited into a magical world by a tour guide who speaks in a made-up language and leads you through a series of tableaus such as a forest, a desert, and a tree of life. “You have a certain control over each of those environments. It’s a message of joy and hope and play and the beauty of life,” she said. The experience takes about 10 minutes to explore.
